Spring of 2016 I enrolled in my first ever graduate level data science course at the School of Information at UC Berkeley. The course ‘Deconstrucing Data Science’ investigated quantitative methods of machine learning and data analysis. Coming from a humanist background, the course challenged me to think in drastically different ways about evidence, data, and argument. In the process of learning new data science methods, we reflected on experimental design and challenged the underlying assumptions of empirical methods. These critical reflections resonated with similar debates around the ‘scientific’ character of history and the social sciences to draw informed conclusions about the past and society.

This new course was taught by natural language processing, computational linguistics, machine learning, and digital humanities extraordinaire Professor David Bamman. Bamman’s diverse background in classics and computer science offered a critical and humanistic approach to the new and developing field of data science.

As I have explained before, quantitative methods and scientific reasoning deeply shape my research:

“I am interested in the colonial and post-colonial history of Vietnamese libraries in the twentieth century. I do not just do ‘techie’ things on the side, or have a ‘digital humanities component’ to my Ph.D. dissertation in history. The world of digital humanities, experimental design, and quantitative methods is deeply interwoven into my thinking and research. All of these seemingly disparate fields have converged and push me to investigate my methods of questioning, explanation, and discovery.”

This data science course helped me to build a foundation of critical thinking in experimental design as well as learn and apply new data science techniques to answer my humanistic research questions.

A Humanist does ‘Tech Stuff’—Actually more like engaging in critical inquiry and experimental design

Although the class attempted to bridge disciplinary boundaries, by the end of the semester, I was the only ‘humanities’ student who was still enrolled in the course. Most graduate students came from the School of Information, computer science, or quantitative social sciences backgrounds. My so-called ‘tech’ background up to that moment was piece-meal and I often dealt with a certain imposter syndrome and sense of not-belonging. (See the roadmap of my ’Tech Training’) However, Professor Bamman sought to facilitate an inclusive environment predicated on critical inquiry and methodological debate.

While on the surface we were introduced to new machine learning methods, linear regression, and probabilistic models, on a deeper level we were learning how to ask questions and how to answer them. A research question needed to be specific and translate into operationalizable tasks. Data to answer these tasks needed to exist, but not without critical inquiry into its production and its limitations.

I appreciated these conceptual discussions because it brought us out of the tech weeds of “Let’s do this,” to think about “Why do this?” In other words, we were encouraged to resist quick technical implementation without critical reflection. When tasked with a research problem and data science homework, we were reminded to ask the big questions of ‘Why?’ and ‘What does this actually answer?’ throughout all the technical steps.

Our Final Project: ‘Deconstructing Libraries’

For the final class project, we were given free reign to implement the new methods we learned onto a domain specific project.

I teamed up with Jordan Shedlock (See his awesome final project, a data visualization of the Robbins Collection), a Master’s of Information Management and Systems student who had a diverse background in Russian studies, libraries, and digital humanities. Jordan was incredibly knowledgeable, patient, and introduced me to the power of python and Regular Expressions for cleaning data for our project.

In this post, I would like to share with you our process of operationalizing humanistic questions, introduce a few tools that we used to clean and read data, and provide some concluding lessons. If you would like to understand in detail our argumentative process, experiment, and findings, our final report, data source, and code is available on GitHub to tinker and explore. I would love to receive feedback on the project since I am still continuing working on quantitative analysis of library bibliographies and catalogs in my dissertation work.

- GitHub Repository (Contains all data, code, final report and slides presentation)

- Final Report

- Slides Presentation

Introduction

Our project developed as a proof of concept for my dissertation work on the history of libraries in Vietnam (1887-1986). I was interested in a lot of big, conceptual questions such as the following:

- How does the library develop as an institution of knowledge in Vietnam?

- In what ways do Vietnamese libraries transform through political regime changes?

- How do libraries express colonial and post-colonial state power as well as subvert it?

And a bunch of other interrelated themes:

- Library curation

- History of publishing in Vietnam

- Circulation of knowledge on ‘Vietnam’

These questions and themes are core to my dissertation and archival research, and I kept them at the foundation of the final project for our Data Science class. However for our project we focused on a small aspect of these questions that could be designed, testable, and answerable in an experiment. This required the following components:

- Informative and interesting data

- Testable hypotheses

- Experimentation

- Results

Finding Data

One of the first steps to design our project was to find data. For the humanities and history, this stage is one of the most challenging because often, informative and interesting data is difficult to find and not well-structured. For example, humanities data could vary and take the shape of the following:

- Differently formatted fifteenth century handwritten letters

- Images with uneven metadata information

- Published bibliographies in book or PDF format

The challenge of humanities data is that from the start, the data can be difficult to attain, uneven, unformatted, and thus not easily machine readable. This perpetuates the production of scholarship that rely on ‘data of convenience’—well-structured data often in the English language.

For our project, we sought to contribute new work within digital humanities not drawn from data of convenience.

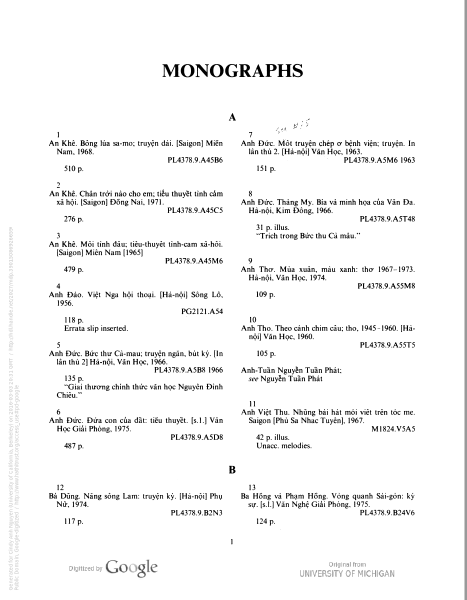

Our data source was the published bibliography of Vietnamese holdings in the Library of Congress from 1979-1985. This bibliography was published in two parts, in 1982 and 1987 (supplement), digitized by University of Michigan, and available on HathiTrust Digital Library (HathiTrust 1982, 1987)

In other words, our data source was messy, non-English, and never before used to conduct this this type of quantitative analysis.

Furthermore, the data source was important and directly related to my questions regarding library curation, history of Vietnamese publishing, and the circulation of knowledge. This data source shed light onto the knowledge of “Vietnam” available to Americans through the Vietnamese-language collection at the Library of Congress. This bibliography was compiled by A. Kohar Rony of the Southern Asia Section of the Asian Division of the Library of Congress.

In the preface, Rony explained that a great surge of public interest on Vietnam had continued even after the end of the Vietnam War. This is due to the fact that after 1975, 1) a large community of Vietnamese refugees had settled in America were interested and invested in access to this collection and 2) American researchers were banned from field research in Vietnam and thus relied heavily on the collections of the Library of Congress.

The works included in the 1982 and 1987 bibliography list the acquisitions to the collection at the Library of Congress from 1979-1985. The 1982 bibliography includes Vietnamese language materials the LOC collected up to June 1979. The 1987 bibliography includes Vietnamese language materials collected by the LOC from 1979 to 1985. Thus, the collections include both retrospective works and newer items published in the post-war Socialist Republic of Vietnam.

According to the official statements by the Library of Congress, no further detail is provided on collection policies and the building of the collection.

Thus our project sought out to analyze and understand the following aspects about the collection:

- The types of works available in the collection and available to Americans on the subject and related to ‘Vietnam’

- The logic of the collecting regime

- The types of materials published in Vietnam

Cleaning Data – A long aside

While it was exciting to work with never before used data, it meant that much of our time was spent cleaning and restructuring the data to be machine readable.

The bibliographies provided rich metadata on the collection:

- Author

- Title

- Publisher

- Publishing location

- Publishing Date

- Library of Congress Classification code (LCC)

But in order to have the data in a clean format ready to analyze, we had to use the following methods:

- Optical Character Recognition: We used Abbyy Finereader for Vietnamese, English, and French to recognize the the PDF files and exported them into text files.

- OpenRefine: The OCR output was far from perfect. Thus we had to semi-manually clean up data fields. Furthermore, Open Refine provided a way to find a pattern in the mistakes. Most of the mistakes were due to the following:

- Vietnamese diacritical marks were not consistently recognized (mistaking the following à-ã-â-ă-á)

- Line breaks in the original PDF resulted in inconsistent spacing

- Variation between the Vietnamese and English spelling of a city (Saigon and Sài Gòn). The process of ‘cleaning’ data fields or combining or distinguishing data entries as unique were important decisions. For example, do we mark Saigon and Hồ Chí Minh City as the same location? What do we lose by conflating the values? How do we produce consistent values and taxonomy without over-flattening the data?

- vnTokenzier: Vietnamese is a Polymorphemic language. For example, the world revolution = cách mạng has two word tokens. In order for our data analysis to recognize the multi-token words as one word instance, we had to ’tokenize’ the words. For example, the word revolution would be tokenized into “cách-mạng”.

- “vnTokenizer — Vietnamese Word Segmentation | Lê Hồng Phương.” Accessed May 4, 2016. vnTokenizer segments Vietnamese into lexical units with an accuracy of 98% on a test set extracted from the Vietnamese treebank.

- Regular Expressions: We used regular expressions to extract important data such as author, title, year, publisher, location.

The final output of our data clean up were tab separated text files formatted in UTF-16 in lower-case.

Operationalizing the Research Questions

After cleaning our data, we realized that we could only focus on the most consistently read and most informative part of our data—the titles and their city of publication. From here, we developed three main hypotheses:

H1: Hanoi will have more topics on Communism, war, revolution, and army than Saigon.

H2: Saigon will have more topics on US ideas (modernity, democracy, anti-Communism) than Hanoi.

H3: Library of Congress (LOC) collection will prefer Saigon (United States ally) materials over Hanoi.

To operationalize our hypothesis into a probabilistic problem, we ask the following question: What are the words in a title most characteristic of a publication city?

Our hypotheses rest upon the important underlying assumption that a publication title can reveal important information about a work’s content, potential audience, and literary style. Although not a substitute for reading the entirety of the work, a work’s title reveals negotiated information between the author, the perceived audience, the publisher, and in this case, the library collection.

As explained in the final report, we used three different methods to explore and test out this question. Frequency counts, topic models, and Naive Bayes. Each of these methods pushed us to iterate our initial hypotheses as well as critically reflect on our data source.

Concluding Lessons

The main lessons from this project for me were twofold:

First, data cleaning is not only time-intensive but also intellectually rigorous and rewarding.

Second, probabilistic methods are a powerful way of thinking about relationships and causation.

Even if I never reached the stage of data analysis, data cleaning provided incredible insight to my research question. By cleaning the OCR output, I was forced to familiarize myself with the data at an intimate level. I discovered interesting works from the 1960s to 1980s such as the military training pamphlets by the North Vietnamese army, Buddhist texts from the diaspora Vietnamese community, and the volumes of collected literary works of South Vietnam. I was confronted with difficult taxonomy decisions such as conflating politically sensitive vocabularies such as Saigon and Ho Chi Minh City. I reflected on the type of information that can be conveyed by Library of Congress Classification code (LCC), titles of a work, and cities of publication. These revelations opened up new and significant avenues of my research into the role of libraries and the circulation of knowledge on Vietnam.

Out of all the methods attempted in this project, Naive Bayes proved to be a useful way of thinking about argument and explanation. Why is probability and probabilistic thinking important? Because it is the quantification of uncertainty.

Our initial question, “What are the words in a title most characteristic of a publication city?” could be answered probabilistically. Naive Bayes offered a more structured and convincing method of analyzing the difference in word distributions among Saigon and Hanoi records. We calculated the probability of the words’ appearance conditioned upon its publication city.

Among the most likely tokens for Hanoi were words associated with Communist rhetoric, such as cách mạng (revolution), nhân dân (people), xây dựng (build), and anh hùng (hero). In comparison, the Saigon tokens included more words that could be seen as democratic or nationalist, e.g. công dân (citizen), phật giáo (Buddhism), quê hương (homeland), and hiện đại (modern). There are a lot of assumptions with using Naive Bayes that are worth mentioning, the main one in this case is that Naive Bayes assumes that all words are independent variables. In fact we know that titles and phrases words are in fact interdependent.

This conclusion is exciting in that it offers a probabilistic method to think about the relationship between library collections, topics of works, and their publication location.

| Hanoi | Saigon | |||||

| cách_mạng | 0.002979032 | revolution | truyện_dài | 0.004803892 | long story | |

| truyện_ngắn | 0.001986021 | short story | truyện_ngắn | 0.000729705 | short story | |

| nhân_dân | 0.001871443 | people | giáo_dục | 0.000668896 | education | |

| minh_họa | 0.001795058 | illustrate | cách_mạng | 0.000547279 | revolution | |

| dân_tộc | 0.001527709 | nation | công_dân | 0.00048647 | citizen | |

| truyện_ký | 0.001413131 | memoir | văn_hóa | 0.00048647 | culture | |

| xây_dựng | 0.001413131 | build | văn_học | 0.00048647 | literature | |

| anh_hùng | 0.001298552 | hero | chú_thích | 0.00048647 | note | |

| công_tác | 0.00126036 | activity | chúng_ta | 0.000425661 | we | |

| nghiên_cứu | 0.001183974 | research | phật_giáo | 0.000425661 | Buddhism | |

| giới_thiệu | 0.001145782 | introduction | giới_thiệu | 0.000364853 | introduction | |

| nhiệm_vụ | 0.001069396 | duty | cộng_hòa | 0.000364853 | republic | |

| biên_soạn | 0.000993011 | compile | xã_hội | 0.000364853 | society | |

| khoa_học | 0.000993011 | science | phiên_dịch | 0.000364853 | translate | |

| văn_học | 0.000993011 | literature | quê_hương | 0.000364853 | homeland | |

| xã_hội | 0.000916625 | society | con_người | 0.000304044 | person | |

| nông_nghiệp | 0.000878433 | agriculture | hiện_đại | 0.000304044 | modern | |

| lịch_sử | 0.000802047 | history | giáo_sư | 0.000304044 | professor | |

| nghệ_thuật | 0.000802047 | art | khuôn_mặt | 0.000304044 | face | |

| chú_thích | 0.000802047 | note | cuộc_đời | 0.000304044 | lifetime | |

| bổ_sung | 0.000725662 | supplement | lịch_sử | 0.000304044 | history |

Table 1. Tokens with strongest Hanoi and Saigon probabilities. “Ideologically significant” words are in bold.

Links to Report, Data, Code, and Presentation

- GitHub Repository (Contains all data, code, final report and slides presentation)

- Final Report

- Slides Presentation

Cô Cindy, tôi đang mong Cô trả lời về email của tôi đã trình bày trường hợp P. Lê Công Đắc. Vậy mong Cô xem và cho tôi biết ý kiến mà trong bài Comment tôi đã viết về Catholic Việt Nam. Cám ơn Cô Cindy.

Cô Cindy, thành thật cám ơn Cô vì nhờ trang Book Review, Giáo Sư Keith đã email cho tôi và mời tôi chuyển email của Ông cho Taybuiblog ̣để giải thích sự hiểu lầm của Giáo Sư về Cha tôi Petrus Lê Công Đắc. Chúc Cô và gia đình mọi sự an lành và may mắn trong Năm Mới. Chào Cô. Huynh Lê.

Cô Cindy, tôi có viốt lại comment bằng tiếng Anh nhưng không biếr gửi cho Cô bằng email nào. Mong Cô cho biết để tôi chuyển qua. Cám ơn Cô. Huynh Lê